What is a feedforward neural network (FNN)?

Feedforward neural networks (FNNs) are simple neural networks that pass information in one direction only, from one layer to the next. They can be categorized into single-layer FNNs and multi-layer FNNs, each of which serves different purposes in deep learning.

What is a feedforward neural network (FNN)?

A feedforward neural network (FNN) is an artificial neural network that operates without any feedback loops. This network type is considered one of the simplest forms of artificial neural networks because it only works in a forward-moving direction. Deep feedforward neural networks play an essential role in creating models in the fields of deep learning and artificial intelligence. Depending on the number of layers used, FNNs can be classified as single-layer or multi-layer networks.

How does a feedforward neural network work?

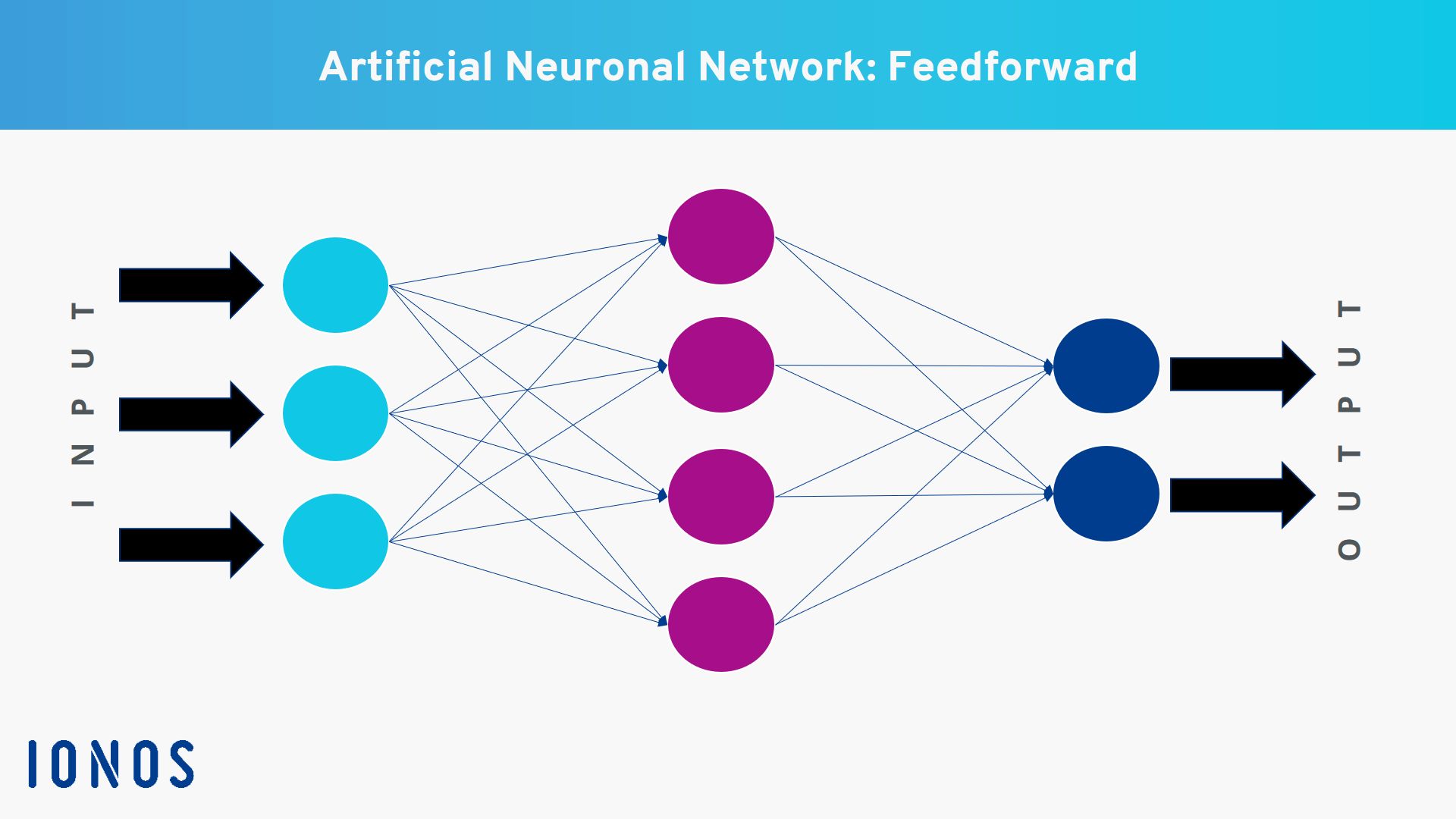

Like all artificial neural networks, feedforward neural networks are inspired by the human brain, which processes information through a network of neurons. FNNs have a minimum of two layers: an input layer and an output layer. Between these layers, there can also be additional layers, known as hidden layers. Each layer is connected only to the layer that directly follows it. The connections between the layers are formed through edges, a term taken from graph theory.

- Input Layer: The input layer receives all the input data that is fed into the network. Each neuron in this layer corresponds to a feature in the incoming data.

- Hidden Layers: Between the input and output layers, there can also be hidden layers. Each hidden layer consists of multiple neurons that connect the input and output layers.

- Output Layer: The output layer of the network produces the final result.
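The layer structure described above can be sketched as a small graph. The following is a minimal illustration, not a reference implementation: the helper name `build_edges`, the layer sizes, and the assumption that every neuron connects to every neuron in the next layer (a fully connected network) are all chosen for this example.

```python
# Hypothetical helper: models the network as a graph whose edges
# only run from one layer to the layer that directly follows it.
# Assumption: layers are fully connected (every neuron to every next neuron).

def build_edges(layer_sizes):
    """Return the edges of a feedforward network as (source, target) pairs.

    Each neuron is identified by (layer_index, neuron_index).
    """
    edges = []
    for layer in range(len(layer_sizes) - 1):
        for src in range(layer_sizes[layer]):
            for dst in range(layer_sizes[layer + 1]):
                edges.append(((layer, src), (layer + 1, dst)))
    return edges


# Single-layer FNN: 3 input neurons feed 2 output neurons directly.
single_layer = build_edges([3, 2])

# Multi-layer FNN: a hidden layer with 4 neurons sits in between.
multi_layer = build_edges([3, 4, 2])

print(len(single_layer))  # 3 * 2 = 6 edges
print(len(multi_layer))   # 3 * 4 + 4 * 2 = 20 edges
```

Because every edge points from layer `i` to layer `i + 1`, the graph contains no loops, which is the defining property of a feedforward network.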

In a feedforward neural network, information only flows in one direction, from the input layer to the output layer. A set of inputs is introduced into the input layer, and the neurons in this layer take the data and apply weights to it. In a single-layer FNN, the neurons pass the information on to the output layer. In a multi-layer FNN, however, the information is first passed to the hidden layers. Since this process can’t be seen, these layers are referred to as “hidden.” Once the data reaches the hidden layers, it’s reweighted. The processed information is then delivered as the final result by the neurons in the output layer.

At each step of the process, a neuron adds up the weighted information it receives. Then, a threshold value is applied to this sum to determine whether the neuron should pass the information along or not. This threshold is typically set to zero. In a feedforward neural network, there are no backward connections between layers, meaning each edge only links to the layer that directly follows it.
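The forward pass described above can be sketched in a few lines of plain Python. This is a simplified illustration under stated assumptions: the function names, the example weights, and the choice that a neuron fires only when its weighted sum is strictly greater than the threshold are all made up for this example, and bias terms are left out.

```python
def step(weighted_sum, threshold=0.0):
    """Fire (1) if the weighted sum exceeds the threshold, otherwise stay silent (0).

    Assumption: strictly greater than the threshold counts as firing.
    """
    return 1 if weighted_sum > threshold else 0


def layer_forward(inputs, weights, threshold=0.0):
    """Compute the outputs of one layer.

    weights[j][i] is the weight on the edge from input i to neuron j.
    """
    outputs = []
    for neuron_weights in weights:
        weighted_sum = sum(w * x for w, x in zip(neuron_weights, inputs))
        outputs.append(step(weighted_sum, threshold))
    return outputs


def forward(inputs, layers):
    """Pass the inputs through each layer in order, never backwards."""
    activations = inputs
    for weights in layers:
        activations = layer_forward(activations, weights)
    return activations


# Illustrative weights for a multi-layer FNN: 2 inputs, 2 hidden neurons, 1 output.
hidden_weights = [[1.0, -1.0], [-1.0, 1.0]]
output_weights = [[1.0, 1.0]]

print(forward([1.0, 0.0], [hidden_weights, output_weights]))  # [1]
print(forward([1.0, 1.0], [hidden_weights, output_weights]))  # [0]
```

With these particular weights, the network outputs 1 only when exactly one of the two inputs is active. Passing a single weight matrix to `forward` instead of two gives the single-layer case, where the inputs go straight to the output layer.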

What are the most important use cases for FNNs?

There are many potential uses for feedforward neural networks. These types of networks are particularly useful for processing and linking large volumes of unstructured data. Below are some examples of when FNNs are used:

- Speech recognition and processing: Feedforward neural networks can be used to convert text into speech or to convert spoken language into written text.

- Image recognition and processing: FNNs can analyze images and identify certain features. This can be used to digitize handwritten notes, for example.

- Classification: A feedforward neural network can classify data based on predefined parameters.

- Forecasting: Feedforward neural networks are also well suited to making predictions about events or trends. They can be used in weather prediction, early warning systems in disaster management, space exploration and defense.

- Fraud detection: These networks can play an important role in identifying fraudulent activities or patterns.

What’s the difference between a feedforward neural network and a recurrent neural network (RNN)?

While both networks use neurons to process information and pass it from an input layer to an output layer, a recurrent neural network (RNN) can also send information backwards. An RNN has connections that allow information to travel back and forth through the layers, giving the network feedback loops where information can be stored.

Such networks are especially useful for determining results when context is important, such as in text processing. Take the word “bank”. This could refer to a financial institution or the area bordering a river. To determine the correct meaning, it’s necessary to know what the context is. Unlike RNNs, feedforward neural networks don’t have a mechanism to store this type of information.
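The difference can be illustrated with a deliberately simplified sketch. The function names, the scalar weights, and the omission of activation functions are assumptions made for this example; real RNNs use weight matrices and nonlinear activations.

```python
def feedforward_step(x, w_in):
    """The output depends only on the current input."""
    return w_in * x


def recurrent_step(x, h_prev, w_in, w_rec):
    """The output depends on the current input and on the previous state."""
    return w_in * x + w_rec * h_prev


# A short input sequence: a signal at the first step, then nothing.
sequence = [1.0, 0.0, 0.0]

# The feedforward unit treats every input in isolation.
ff_outputs = [feedforward_step(x, w_in=1.0) for x in sequence]

# The recurrent unit feeds its own previous output back in as state.
h = 0.0
rnn_outputs = []
for x in sequence:
    h = recurrent_step(x, h, w_in=1.0, w_rec=0.5)
    rnn_outputs.append(h)

print(ff_outputs)   # [1.0, 0.0, 0.0]
print(rnn_outputs)  # [1.0, 0.5, 0.25]
```

The feedforward outputs drop to zero as soon as the input does, while the recurrent outputs still carry a fading trace of the first input. That carried-over state is what lets an RNN take earlier context into account.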