CAP Theorem

Cloud computing has enriched the digital world with many new possibilities. Providing computing resources over the internet enables rapid innovation, flexible resource use, and scaling options that can be configured to your own requirements. However, the CAP theorem shows that this flexibility comes at the cost of compromises in other areas. We explain what the CAP theorem (also referred to as Brewer’s theorem) is and show where this theorem about distributed systems can be observed in practice.

What is the CAP theorem?

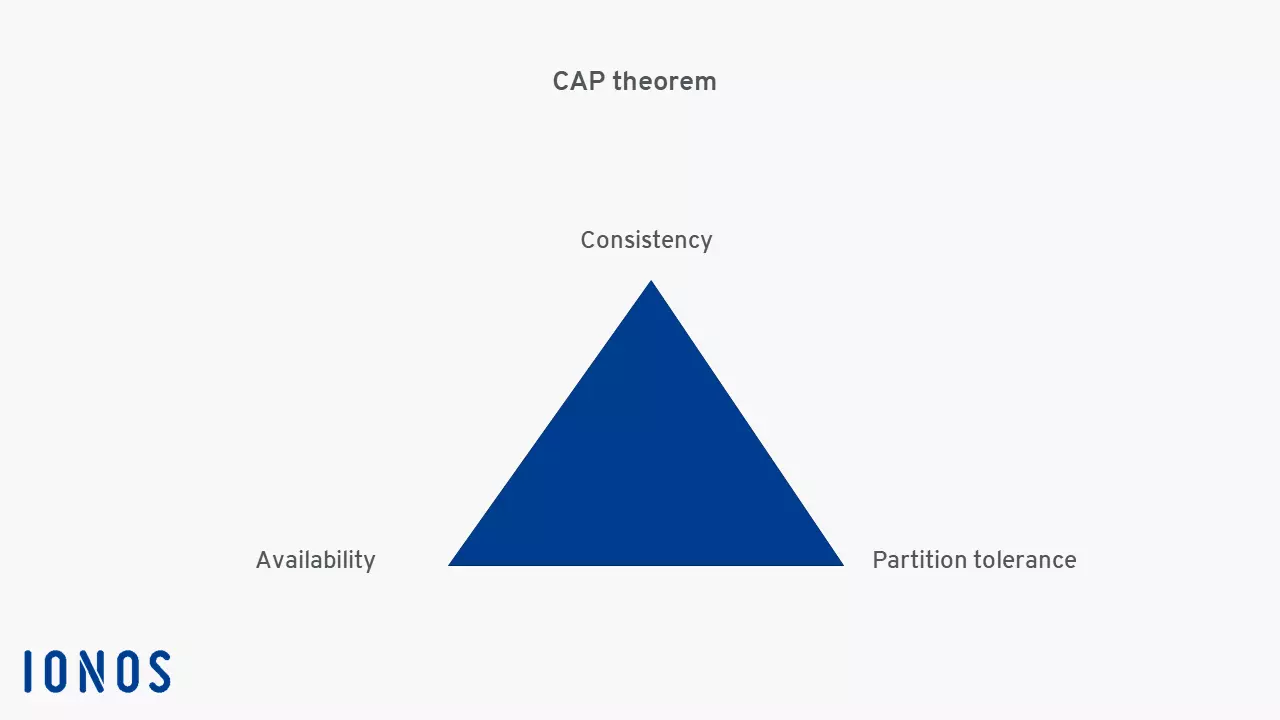

The CAP theorem states that it is impossible to provide or guarantee more than two out of the following three properties at the same time in a distributed system:

- Consistency: All clients see the same data at the same time.

- Availability: The system always responds, so all clients can read and write at any time.

- Partition tolerance: The system can continue to operate as a whole even if individual nodes fail or can no longer communicate with each other.

The CAP theorem is based on a conjecture that the computer scientist Eric Brewer presented at the 2000 Symposium on Principles of Distributed Computing (PODC). This principle on the limitation of properties in distributed systems is therefore also referred to as Brewer’s theorem. In 2002, Seth Gilbert and Nancy Lynch of MIT published a formal proof of this conjecture, establishing it as a theorem.

Nowadays, this theorem serves as a reference when building a new distributed system: a basic model is chosen that focuses on two of the three properties. According to the CAP theorem, distributed systems can therefore generally be divided into three categories:

- CP system (consistency and partition tolerance)

- AP system (availability and partition tolerance)

- CA system (consistency and availability)
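The difference between the CP and AP categories shows up once the network is partitioned. The following is a minimal sketch in Python, not a real database: the class `TwoNodeStore`, its `mode` flag, and the `partitioned` switch are hypothetical names made up for illustration. It assumes two replicas that normally copy every write to each other.

```python
class TwoNodeStore:
    """Toy store with two replicas; `mode` is "CP" or "AP" (illustrative only)."""

    def __init__(self, mode):
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.values = [None, None]   # one value per replica
        self.partitioned = False     # True: replicas cannot reach each other

    def write(self, node, value):
        """Write via replica `node`; return True if the write was accepted."""
        if not self.partitioned:
            # Healthy network: replicate to both nodes.
            self.values = [value, value]
            return True
        if self.mode == "CP":
            # Consistency first: refuse a write that cannot be replicated.
            return False
        # Availability first: accept locally, replicas now diverge.
        self.values[node] = value
        return True

    def read(self, node):
        return self.values[node]


def demo(mode):
    store = TwoNodeStore(mode)
    store.write(0, "v1")
    store.partitioned = True          # simulate a network partition
    accepted = store.write(0, "v2")
    return accepted, store.read(0), store.read(1)
```

Under these assumptions, `demo("CP")` rejects the second write and both replicas keep `"v1"`, whereas `demo("AP")` accepts it and the two replicas return different values until the partition heals.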

The CAP theorem in practice

To clarify the idea behind the CAP theorem, the following examples of distributed systems illustrate the principle. They also show in what respect Brewer’s theorem applies to each system.

Example of an AP system: the domain name system

A well-known example of an AP system is the DNS (i.e. the domain name system). This central network component is responsible for resolving domain names into IP addresses and focuses on the two properties of availability and partition tolerance. Due to the large number of servers, the system is available virtually all the time. If a DNS server fails, another one takes its place. However, in line with the CAP theorem, the DNS does not guarantee consistency. If a DNS entry is modified, it may take up to several days, depending on how long resolvers cache the old entry, before the modification has been passed through the system hierarchy and is visible to all clients.
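The delayed visibility of a changed entry can be sketched with a caching resolver. This is a simplified model, not the actual DNS protocol: the classes `Authoritative` and `CachingResolver` are hypothetical, time is passed in explicitly as `now` (in seconds) to keep the example deterministic, and the addresses come from the range reserved for documentation.

```python
class Authoritative:
    """Holds the current, authoritative records (illustrative stand-in)."""

    def __init__(self, records):
        self.records = dict(records)

    def lookup(self, name):
        return self.records[name]


class CachingResolver:
    """Answers from its cache until the entry's time to live (TTL) expires."""

    def __init__(self, upstream, ttl_seconds):
        self.upstream = upstream
        self.ttl = ttl_seconds
        self.cache = {}  # name -> (address, expires_at)

    def resolve(self, name, now):
        entry = self.cache.get(name)
        if entry is not None and now < entry[1]:
            return entry[0]  # still valid in the cache: may be stale
        address = self.upstream.lookup(name)
        self.cache[name] = (address, now + self.ttl)
        return address


auth = Authoritative({"example.com": "192.0.2.1"})
resolver = CachingResolver(auth, ttl_seconds=300)

first = resolver.resolve("example.com", now=0)     # fetched and cached
auth.records["example.com"] = "192.0.2.2"          # the entry is modified
stale = resolver.resolve("example.com", now=100)   # old address from cache
fresh = resolver.resolve("example.com", now=300)   # TTL expired, refetched
```

The resolver keeps answering, which is the availability side of the trade-off, but between the modification and the expiry of the cached entry it returns the old address.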

Example of a CA system: relational database management systems

Database management systems based on the relational database model are a good example of CA systems. These database systems are primarily characterized by a high level of consistency and also strive to achieve the highest possible level of availability. However, under certain circumstances, availability can be reduced in favor of consistency. Meanwhile, partition tolerance plays a less important role.

Example of a CP system: financial and banking applications

High availability is one of the most important properties in the majority of distributed systems, which is why CP systems are less common in practice. However, these systems have proven to be particularly valuable in the financial sector. Banking applications need to be able to reliably debit and transfer funds from accounts. They depend on consistency and partition tolerance to prevent accounting errors, even in the event of disruptions in data traffic.
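One common way to obtain this behavior is to accept a transaction only when a majority of nodes can be reached. The sketch below assumes that approach; it is not how any particular banking system works. `LedgerNode`, `QuorumLedger`, and the `reachable` flag are hypothetical names, and the catch-up of nodes that rejoin after a disruption is deliberately left out.

```python
class LedgerNode:
    def __init__(self, balances):
        self.balances = dict(balances)  # each node keeps its own copy
        self.reachable = True           # False simulates a network disruption


class QuorumLedger:
    """Toy ledger that only accepts transfers with a majority of nodes."""

    def __init__(self, node_count, balances):
        self.nodes = [LedgerNode(balances) for _ in range(node_count)]

    def transfer(self, source, target, amount):
        live = [n for n in self.nodes if n.reachable]
        # Without a strict majority, refuse rather than risk divergence.
        if len(live) <= len(self.nodes) // 2:
            raise RuntimeError("no majority reachable; transfer refused")
        if live[0].balances[source] < amount:
            raise ValueError("insufficient funds")
        for node in live:
            node.balances[source] -= amount
            node.balances[target] += amount


ledger = QuorumLedger(3, {"alice": 100, "bob": 0})

ledger.nodes[2].reachable = False        # one of three nodes is cut off
ledger.transfer("alice", "bob", 30)      # 2 of 3 reachable: accepted

ledger.nodes[1].reachable = False        # now only 1 of 3 is reachable
try:
    ledger.transfer("alice", "bob", 10)
    refused = False
except RuntimeError:
    refused = True                       # availability is given up here
```

With two of three nodes reachable, the transfer goes through. Once only one node remains, the ledger refuses further transfers instead of booking them on a minority, which is exactly the loss of availability a CP system accepts.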